The industry consensus is clear: use the biggest, most powerful model available. Opus over Sonnet. Sonnet over Haiku. The smarter the AI, the better the work. It feels obvious. It is also backwards.

Introduction

1. The Assumption That Breaks Engineering

I use Claude Haiku almost exclusively. Not because I cannot afford Opus. Not because I lack access. I use Haiku because when I tried the opposite - defaulting to the biggest model first - I became a worse engineer.

This sounds backwards. Bigger models are objectively more capable. They understand nuance better. They make fewer mistakes. They require less precise prompting. So of course they should make you faster.

Except they are not.

The pattern I kept hitting was this: I would use Opus for a complex task, get a result in one shot, ship it, move on. It felt productive. But when I started trying Haiku on the same tasks, a different pattern emerged. Haiku would fail on my first prompt. I would debug why. I would structure my context better. I would break the problem down. And then it would work - not just work, but often produce a better result than Opus had.

The difference was not the model. The difference was that Opus let me stay vague.

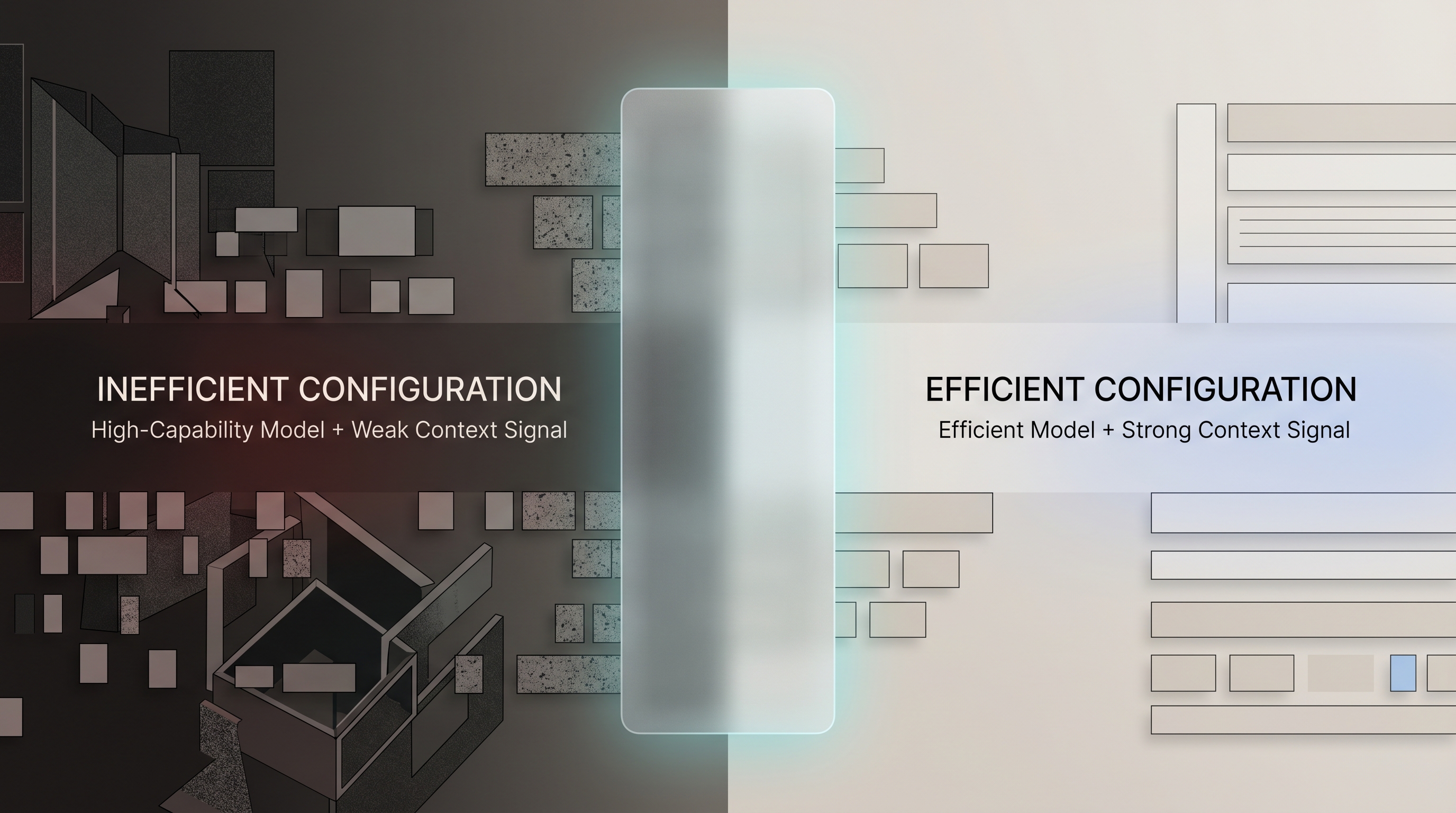

2. The Real Problem: Bigger Models Hide Bad Habits

Opus compensates for sloppy prompting. If you give it a vague specification with chaotic context, it will often figure out what you meant. It will make reasonable assumptions. It will fill in gaps. This feels like intelligence. It is actually model power masking a lack of engineering discipline.

Here is what happens in practice:

Opus workflow:

- Write loose prompt with mixed ideas

- Get a reasonable answer

- Iterate on the output (not the specification)

- Eventually land on something workable

- Never fully understand what you asked for

Haiku workflow:

- Write loose prompt

- Get a confused, partial answer

- Realize your specification was incoherent

- Clarify the specification instead of the output

- Haiku executes cleanly

The Opus path takes fewer iterations. The Haiku path takes less total time, because you stop wandering and start building.

I noticed this first when building my content strategy system. I had a vague idea: "Help me organize my writing voice into a structured document that AI can use to generate content that sounds like me." I tried prompting Opus to just figure it out. It produced a decent output. But the output was generic. It sounded like a style guide, not like a specification of how I actually think.

Here is what happened:

What I asked Opus:

    Help me organize my writing voice into a document that AI can use to generate content that sounds like me.
    → Generic style guide (not useful)

What Haiku forced me to build:

    # VOICE.md

    ## Tone Composition (Weighted)
    - 50% - Steady, authoritative guide
    - 25% - Dynamic disruptor
    - 25% - Bold visionary

    ## Language Style (Weighted)
    - 50% - Analytical + analogy-driven
    - 30% - Technical precision
    - 20% - Light narrative

    ## Cadence & Delivery
    70% - Conversational, spontaneous flow
    30% - Deliberate, structured emphasis

    ## Core Metaphors
    Learning = Flame (constant, internal)
    Balance = Reverse Symmetry
    Focus = Single Thread

    → Operational specification (works perfectly)

Then I tried Haiku. It failed immediately. The failure forced me to ask a harder question: what do I actually mean by "voice"? Not tone, not style - voice. The gap between "I know it when I see it" and "here is a precise decomposition" is enormous. Opus had let me skip that gap. Haiku refused.

So I built VOICE.md - a constitutional document that breaks down tone composition by percentage, language style by percentage, cadence, core metaphors, signature patterns. Not aesthetic. Operational. Then I fed that to Haiku, and it executed perfectly. The output was unmistakably me because the spec was unmistakably clear.
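One way to keep a document like VOICE.md operational rather than aesthetic is to treat it as data. The sketch below parses the weighted sections, checks that each one sums to 100%, and renders the weights as explicit instructions. It assumes the `## Heading (Weighted)` / `- NN% - label` layout shown above and ignores the unweighted sections. The function names and prompt wording are illustrative and do not come from any real tool.

```python
import re

# Hypothetical excerpt of VOICE.md; only "(Weighted)" sections are parsed.
VOICE_MD = """\
## Tone Composition (Weighted)
- 50% - Steady, authoritative guide
- 25% - Dynamic disruptor
- 25% - Bold visionary

## Language Style (Weighted)
- 50% - Analytical + analogy-driven
- 30% - Technical precision
- 20% - Light narrative
"""

# Matches lines like "- 50% - Steady, authoritative guide".
WEIGHT_LINE = re.compile(r"^-?\s*(\d+)%\s*-\s*(.+)$")


def parse_weighted_sections(text: str) -> dict[str, list[tuple[int, str]]]:
    """Map each '## ... (Weighted)' heading to its (percent, label) entries."""
    sections: dict[str, list[tuple[int, str]]] = {}
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("## "):
            heading = line[3:]
            current = heading if heading.endswith("(Weighted)") else None
            if current:
                sections[current] = []
        elif current and (m := WEIGHT_LINE.match(line)):
            sections[current].append((int(m.group(1)), m.group(2).strip()))
    return sections


def validate(sections: dict[str, list[tuple[int, str]]]) -> None:
    """Fail loudly if any weighted section does not sum to 100%."""
    for heading, entries in sections.items():
        total = sum(pct for pct, _ in entries)
        if total != 100:
            raise ValueError(f"{heading} sums to {total}%, expected 100%")


def to_system_prompt(sections: dict[str, list[tuple[int, str]]]) -> str:
    """Render the weights as explicit instructions for a small model."""
    lines = ["Write in the author's voice using these weights:"]
    for heading, entries in sections.items():
        name = heading.removesuffix(" (Weighted)")
        parts = "; ".join(f"{pct}% {label}" for pct, label in entries)
        lines.append(f"- {name}: {parts}")
    return "\n".join(lines)


sections = parse_weighted_sections(VOICE_MD)
validate(sections)
print(to_system_prompt(sections))
```

The validation step is the useful part. A spec whose weights do not add up is ambiguous, and a small model will expose that ambiguity anyway.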

Opus might have done the same work. But I would never have written VOICE.md. I would have kept iterating on outputs until Opus eventually landed close enough. I would have optimized for model intelligence instead of engineering clarity.

Bigger models let you think less. Smaller models force you to think clearly.

3. Constraint as a Teacher

Every strong engineer I know works under constraints. Token limits. Latency budgets. Memory footprints. Network costs. These are not nice-to-haves. They are the forcing function that separates good design from bloated design.

The same principle applies to AI workflows. When you work with a constrained model, you are forced to optimize at every layer:

- Prompt clarity: Vague prompts fail on Haiku, so you learn to be precise

- Context structure: You cannot dump entire codebases into context, so you learn to supply only what matters

- Task decomposition: Complex problems cannot be solved in one shot, so you break them into stages

- Feedback loops: You iterate faster because each cycle is cheaper, so you experiment more

- Reusability: Since you cannot recreate context each time, you build context files (.md files, memory systems, tools) that compound
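You can make the "supply only what matters" habit mechanical. The minimal sketch below assumes you already have a relevance score for each candidate file; how you compute that score is up to you. It approximates tokens as roughly four characters each. That is a rule of thumb for English text, not a real tokenizer.

```python
from dataclasses import dataclass


@dataclass
class Snippet:
    name: str         # e.g. a file path such as "VOICE.md"
    text: str
    relevance: float  # caller-supplied score; higher means more relevant


def estimate_tokens(text: str) -> int:
    """Rough heuristic: about 4 characters per token. Not a real tokenizer."""
    return max(1, len(text) // 4)


def pack_context(snippets: list[Snippet], budget_tokens: int) -> list[Snippet]:
    """Greedily keep the most relevant snippets that still fit in the budget."""
    chosen: list[Snippet] = []
    used = 0
    for s in sorted(snippets, key=lambda s: s.relevance, reverse=True):
        cost = estimate_tokens(s.text)
        if used + cost <= budget_tokens:
            chosen.append(s)
            used += cost
    return chosen


files = [
    Snippet("VOICE.md", "x" * 4_000, relevance=0.9),
    Snippet("CLAUDE.md", "x" * 2_000, relevance=0.8),
    Snippet("full_repo_dump.txt", "x" * 400_000, relevance=0.3),
]
print([s.name for s in pack_context(files, budget_tokens=2_000)])  # ['VOICE.md', 'CLAUDE.md']
```

Greedy packing by relevance is a simplification, not an optimal knapsack solution. The point is that the budget is explicit, so a whole-repository dump never slips into the prompt by default.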

This is not deprivation. This is the path to building real systems instead of one-off interactions.

Compare this to the Opus-first engineer. No pressure to optimize. Context? Dump everything. Prompt clarity? The model will figure it out. Task decomposition? Unnecessary - Opus can handle it in one shot. Reusability? Why build context files when you can just re-prompt each time?

Over time, the Haiku-first engineer builds infrastructure. The Opus-first engineer builds a habit of asking for more.

What does this infrastructure look like? Here is a real example from a project:

    # CLAUDE.md - The context your model needs to execute

    ## Project Overview
    Static site built with Astro (v4.5+). Tech stack: vanilla JS, CSS Grid, no frameworks.

    ## Data Layer: Blog Metadata
    **Single source of truth:** src/data/blog-metadata.ts

    Exports:
    - blogPosts array (slug, title, date, category, readingTime, tags, description, ogImage)
    - getLatestPosts(n) - fetch N most recent posts
    - getRelatedPosts(slug, n) - fetch N related posts by tag overlap
    - getAllTags() - all unique tags across all posts

    **CRITICAL:** When adding a new blog post, update TWO files:
    1. src/data/blog-metadata.ts (TypeScript - used by Astro pages)
    2. scripts/blog-metadata.js (JavaScript - used by RSS and OG generators)

    If either is missing, RSS generation and OG images will fail.

    ## Known Issues & Gotchas
    ⚠️ ai-unlocks-economics missing from metadata - no entry in blog-metadata.ts
    ⚠️ Tailwind CDN loaded for tag pages only (exception to "no frameworks" rule)
    ⚠️ Hardcoded dates in sitemap script - must update LASTMOD_DATES object manually

This is not abstract documentation. This is the context that makes Haiku work. Without it, Haiku fails. With it, Haiku executes perfectly every time. That context file is what the constraint forced me to build.
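The "update TWO files" rule is exactly the kind of gotcha a script can enforce. The hedged sketch below assumes each post entry in both files contains a literal `slug: '...'` field (single- or double-quoted). That is a guess about the file format. `diff_metadata` and `check_files` are hypothetical helpers and are not part of Astro.

```python
import re
from pathlib import Path

# Assumption: every post entry contains  slug: 'my-post'  or  slug: "my-post".
SLUG_PATTERN = re.compile(r"""\bslug\s*:\s*['"]([^'"]+)['"]""")


def extract_slugs(source: str) -> set[str]:
    """Collect every slug literal found in a metadata source file."""
    return set(SLUG_PATTERN.findall(source))


def diff_metadata(ts_source: str, js_source: str) -> dict[str, set[str]]:
    """Report slugs present in one metadata file but not the other."""
    ts, js = extract_slugs(ts_source), extract_slugs(js_source)
    return {"missing_from_js": ts - js, "missing_from_ts": js - ts}


def check_files(
    ts_path: str = "src/data/blog-metadata.ts",
    js_path: str = "scripts/blog-metadata.js",
) -> bool:
    """Print any mismatches; return True only when both files list the same posts."""
    result = diff_metadata(Path(ts_path).read_text(), Path(js_path).read_text())
    for key, slugs in result.items():
        for slug in sorted(slugs):
            print(f"{key}: {slug}")
    return not any(result.values())
```

If you call `check_files()` from a pre-commit hook or CI step, the build fails before RSS or OG generation breaks. Regex extraction is brittle, so importing the data directly would be sturdier if your build setup allows it.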

4. Clarity Unlocks Iteration Speed

This is the core insight: iteration speed compounds faster than raw intelligence.

With Haiku, a failed prompt takes 20 seconds and costs $0.002. With Opus, a failed prompt takes 30 seconds and costs $0.05. But the real difference is psychological. At Haiku costs, you never hesitate to experiment. Should I try a different angle? Iterate. Rephrase? Iterate. Test three approaches in parallel? Obviously.

At Opus costs, you optimize for fewer, better requests. You think harder before prompting. You aim for the perfect prompt on the first try. You reduce experimentation because each experiment is expensive.

And yet: do careful one-shot attempts with Opus beat rapid iteration with Haiku?

In my own testing, no. I ran a direct test to check my reasoning. The task: extract a structured content calendar from raw notes, with consistent formatting and category tagging. I ran 10 attempts on each model with progressively refined prompts. For each, I tracked success rate (output meets spec without manual correction), time to acceptable output including revisions, and total cost.

| Metric | Haiku (10 attempts) | Opus (10 attempts) |
|---|---|---|
| Success rate (no correction needed) | 60% | 80% |
| Average time to acceptable output | 4 min (incl. revisions) | 6 min (incl. revisions) |
| Total cost (10 runs) | $0.02 | $0.50 |
| Reusable context file produced | Yes - failures forced it | No - Opus compensated without it |

Opus succeeded more often per attempt. But Haiku reached an acceptable answer faster in total time, cost 25x less, and, critically, the debugging process produced a reusable context file I still use today. The Opus path produced nothing durable.

Note on the 60% figure: this reflects unoptimized prompting, before the context file that Haiku's failures forced me to build. After structuring the specification into an operational document (equivalent to VOICE.md), the same task ran at over 90% without manual correction. The 60% is where you start. The context file is the output that changes the number.
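The bookkeeping behind a comparison like this is small. The sketch below shows how per-attempt records could be rolled up into the three numeric metrics in the table above. The `Attempt` record and its field names are assumptions, and judging "passed without correction" is still a human call.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Attempt:
    model: str
    passed_without_correction: bool  # output met the spec with no manual edits
    minutes_to_acceptable: float     # includes any revision time
    cost_usd: float


def summarize(attempts: list[Attempt]) -> dict[str, dict[str, float]]:
    """Group attempts by model and compute success rate, mean time, and total cost."""
    by_model: dict[str, list[Attempt]] = {}
    for attempt in attempts:
        by_model.setdefault(attempt.model, []).append(attempt)
    return {
        model: {
            "success_rate": sum(a.passed_without_correction for a in runs) / len(runs),
            "avg_minutes": mean(a.minutes_to_acceptable for a in runs),
            "total_cost_usd": sum(a.cost_usd for a in runs),
        }
        for model, runs in by_model.items()
    }
```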

I can run 50 Haiku experiments for the cost of 2 Opus runs. If Haiku succeeds even 30% of the time without rework, and I need to refine those wins, I am ahead. If I combine those iterations with better context structure (which the constraint forced me to build), I am way ahead.
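The budget argument is easy to check. The worked version below uses:

- the per-attempt costs quoted earlier ($0.002 for Haiku, $0.05 for Opus),
- a $0.10 budget,
- the pessimistic 30% Haiku rate from the paragraph above,
- the 80% Opus rate from the table.

It counts expected first-pass successes only and does not model the refinement those wins still need. `Decimal` avoids floating-point noise in the division.

```python
from decimal import Decimal


def expected_successes(budget: Decimal, cost_per_run: Decimal, success_rate: Decimal) -> Decimal:
    """Whole runs the budget buys, times the per-run success rate."""
    runs = budget // cost_per_run  # floor division: partial runs are not counted
    return runs * success_rate


BUDGET = Decimal("0.10")  # the cost of 2 Opus runs at $0.05 each
haiku = expected_successes(BUDGET, Decimal("0.002"), Decimal("0.30"))  # pessimistic 30% rate
opus = expected_successes(BUDGET, Decimal("0.05"), Decimal("0.80"))    # 80% rate from the table
print(haiku, opus)  # 15.00 1.60
```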

The math is simple: iteration velocity beats intelligence once intelligence is high enough. And in my experience, Haiku is intelligent enough for about 90% of real work.

The remaining 10% - deep reasoning, multi-step mathematics, novel research synthesis - those are Opus domains. But most of what engineers build is not in that 10%. It is coordinating systems, writing code, debugging, structuring data, clarifying ideas. Haiku handles all of that. And with cheap, fast iteration, Haiku gets you to the answer faster.

5. System Design Beats Model Choice

Here is what I learned optimizing token usage across large-scale AI projects: the model choice contributes maybe 15-20% to outcome quality. The system design contributes 60-70%.

System design is:

- Prompt structure: How you frame the task, break it down, provide examples

- Context preparation: Which files, specifications, or history you include (and which you omit)

- Tool integration: What the model has access to (APIs, databases, file systems)

- Feedback loops: How you validate output and iterate

- Stateful workflows: How you carry forward insights from one run to the next

I see this pattern across my own projects. My hyperoptimize-claude-code article documents 16 optimization strategies. Exactly one of them is "use a smaller model." The rest are system design: context indexing, task decomposition, tool-first workflows, memory systems, MCP integration.

A well-designed system with Haiku outperforms a poorly-designed system with Opus. Every time.

Here is what the difference looks like in practice:

    ## Haiku-First Approach: Decomposed Workflow

    Stage 1: Analysis (Haiku)
    Analyze this blog post for:
    - Core argument
    - Supporting evidence
    - Implicit assumptions
    - Potential objections

    Output: Structured analysis in JSON

    ---

    Stage 2: Outline Creation (Haiku)
    Given the analysis, create an outline:
    - Main sections (3-5)
    - Subsections for each
    - Key transition points
    - Where to add examples

    Output: Markdown outline with anchors

    ---

    Stage 3: Content Expansion (Haiku)
    Given the outline, write each section:
    - Section 1: Introduction (300 words max)
    - Section 2: Core argument (500 words)
    - ...repeat for each section

    Output: Drafted article

    ---

    Stage 4: Refinement (Opus only if needed)
    Polish section 3 for clarity and depth.

    Cost: $0.002 + $0.002 + $0.003 + $0.03 = $0.037
    Time: 2 min + 1 min + 3 min + 2 min = 8 min total

vs.

    ## Opus-First Approach: One-Shot
    "Write a comprehensive blog post about [topic]"
    → Gets a result
    → Iterate on output (not specification)
    → Never fully understand what you asked for
    → Repeat next month with same effort

    Cost: $0.05
    Time: 5 min + 15 min revision = 20 min total

The Haiku approach takes 8 minutes and $0.037. The Opus approach takes 20 minutes and $0.05. But the real difference is deeper: the Haiku approach forces you to break down the problem into stages. That decomposition is reusable. Next time you write about a different topic, you have a system. With Opus, you start from scratch.
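The decomposed workflow maps naturally onto a small pipeline in which each stage's output becomes the next stage's input. In this minimal sketch:

- `call_model` is a hypothetical callable you would wire to your own client.
- The model names are placeholders, not API model IDs.
- The prompt templates are condensed from the stages above.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical signature: (model_name, prompt) -> completion text.
# Injected so the pipeline can be tested without any network calls.
CallModel = Callable[[str, str], str]


@dataclass
class Stage:
    name: str
    model: str     # placeholder such as "haiku" or "opus", not an API model ID
    template: str  # must contain "{input}"


def run_pipeline(stages: list[Stage], initial_input: str, call_model: CallModel) -> dict[str, str]:
    """Feed each stage's output into the next stage's prompt; keep every intermediate."""
    outputs: dict[str, str] = {}
    current = initial_input
    for stage in stages:
        prompt = stage.template.format(input=current)
        current = call_model(stage.model, prompt)
        outputs[stage.name] = current
    return outputs


BLOG_PIPELINE = [
    Stage("analysis", "haiku", "Analyze for core argument, evidence, assumptions, objections. Return JSON.\n\n{input}"),
    Stage("outline", "haiku", "Given this analysis, create a 3-5 section Markdown outline.\n\n{input}"),
    Stage("draft", "haiku", "Write each section of this outline.\n\n{input}"),
    # Switch this stage's model to "opus" only if the draft genuinely needs it.
    Stage("refine", "haiku", "Polish for clarity and depth.\n\n{input}"),
]
```

Keeping every intermediate output makes it easy to see which stage failed. Moving the refinement stage to Opus is a one-word change in the stage list rather than a rewrite.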

The constraint of working with Haiku forces you to build these systems. You cannot skip context preparation when the model will fail otherwise. You cannot avoid task decomposition. You cannot ignore tool integration. The system design becomes mandatory, not optional.

Opus lets you skip to the result. Haiku forces you to build the machine that produces results.

6. Cost Pressure Distorts Engineering Behavior

This one is subtle but important: expensive models change how you think.

With Opus ($15 per 1M input tokens), every request feels like a commitment. You feel the need to make it count. You carefully structure the prompt. You review the context twice. You hesitate to experiment because "what if this doesn't work?" You settle for "good enough" rather than trying new approaches.

This is rational cost management. It is also mediocre engineering.

The counterargument is worth naming: isn't Opus-driven hesitation just quality control? If you structure the prompt carefully and think hard before sending - isn't that good engineering discipline, regardless of what's driving it?

There are two kinds of hesitation. Hesitation driven by cost anxiety optimizes for fewer API calls. Hesitation driven by specification clarity optimizes for understanding what you actually need before asking for it. The first is cost management. The second is engineering discipline. They look identical from the outside and produce completely different outputs. Haiku forces the second kind - when it fails, the failure surfaces a gap in your specification, not a gap in the model's capability. That distinction is what makes it a teacher rather than just a cheaper option.

With Haiku ($0.80 per 1M input tokens), none of that matters. Experiment? Sure, costs $0.001. Try a completely different approach? Go ahead. Run four variations in parallel? Obviously. Iterate on something exploratory? No friction.
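Per-million prices are easier to reason about per request. The quick conversion below uses only the input-token prices quoted above. Output tokens are billed separately at higher rates and are left out. Prices also change, so treat the constants as placeholders to update.

```python
from decimal import Decimal

# USD per 1M *input* tokens, as quoted in this section. Output tokens are billed
# separately at higher rates and are deliberately left out of this sketch.
INPUT_PRICE_PER_MTOK = {"opus": Decimal("15"), "haiku": Decimal("0.80")}


def input_cost(model: str, input_tokens: int) -> Decimal:
    """Cost of sending `input_tokens` to `model`, input side only."""
    return INPUT_PRICE_PER_MTOK[model] * input_tokens / 1_000_000


def tokens_for(model: str, dollars: Decimal) -> Decimal:
    """How many input tokens a given spend buys on `model`."""
    return dollars * 1_000_000 / INPUT_PRICE_PER_MTOK[model]


# Per-token price gap between the two models at these rates.
ratio = INPUT_PRICE_PER_MTOK["opus"] / INPUT_PRICE_PER_MTOK["haiku"]
```

At these rates:

- $0.001 buys 1,250 Haiku input tokens.
- The same 1,250 tokens cost about $0.019 on Opus.
- Per input token, Opus costs 18.75x as much as Haiku.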

The low cost removes decision anxiety. And low decision anxiety enables exploration. And exploration is where the best insights come from.

I am not saying throw money away on random experiments. I am saying: when the cost approaches zero, your optimization function should not be "how do I minimize API calls." It should be "how do I explore the solution space fastest."

Most teams do the opposite. They see AI costs rising and respond by being more conservative, not less. They cut back on experimentation to save money. This is short-term cost control. It is long-term incompetence.

Cost pressure teaches you to think smaller. Cheap models teach you to think bigger.

A clarification worth making: this is not a case for using AI everywhere or ignoring inference costs. My post AI Pricing Is Fake argues that current prices are subsidized and that your architecture should be designed for real costs. The two arguments point in the same direction: route inference to the cheapest model that can do the job. Haiku-first thinking gets you the exploration benefit at low cost; cost-aware architecture ensures you don't build a system that only works while inference is cheap. The same principle, applied at two different levels.
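"Route inference to the cheapest model that can do the job" can be expressed as a cheapest-first loop. Try the smallest tier, validate the output against your spec, and escalate only on failure. This sketch assumes that `call_model` and the validator are callables you supply, and the tier names are placeholders.

```python
from typing import Callable

CallModel = Callable[[str, str], str]  # hypothetical: (model, prompt) -> text
Validator = Callable[[str], bool]      # your spec check, e.g. "valid JSON with 5-7 points"


def run_cheapest_first(
    prompt: str,
    call_model: CallModel,
    is_acceptable: Validator,
    tiers: tuple[str, ...] = ("haiku", "sonnet", "opus"),  # cheapest first; placeholder names
    attempts_per_tier: int = 2,
) -> tuple[str, str]:
    """Return (model, output) from the cheapest tier whose output passes validation."""
    for model in tiers:
        for _ in range(attempts_per_tier):
            output = call_model(model, prompt)
            if is_acceptable(output):
                return model, output
    raise RuntimeError("No tier produced acceptable output; revisit the specification.")
```

When every tier fails, the function raises an error rather than silently returning the last output. That matches the argument above: repeated failure usually points at the specification, not the model.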

7. The Fragility of Power

Here is a hard truth: if your system only works on the most powerful model, your system is fragile.

What happens when Opus hits rate limits? You wait. What happens when you need to run this at scale and Opus becomes unaffordable? You are stuck. What happens when a new, smaller model becomes available that costs 10% as much? You cannot take advantage of it - your prompts only work on Opus.

Contrast this with a system built on Haiku. If a better, smaller model comes out, you migrate with almost no rework. If you hit rate limits, you can fall back to other models, because your prompts do not depend on one model's capability. If you need to run at scale, costs are negligible. If Opus becomes useful for one specific stage of your pipeline, you can use it surgically without depending on it.

A Haiku-first system is robust. It works across model versions, cost tiers, and constraint levels. An Opus-first system is fragile. It depends on a single point of capability.
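A system whose prompts are not tied to one model can treat rate limits as a routing problem. This minimal fallback-chain sketch sends the same prompt to each model in turn, which only works if the prompt is clear enough to run on any of them. It assumes:

- `RateLimited` is a hypothetical exception your client wrapper would raise on an HTTP 429.
- The model names are placeholders.

```python
import time
from typing import Callable


class RateLimited(Exception):
    """Hypothetical: raise this from your client wrapper on an HTTP 429."""


def call_with_fallback(
    prompt: str,
    models: list[str],
    call_model: Callable[[str, str], str],
    retries_per_model: int = 1,
    backoff_s: float = 0.0,
) -> tuple[str, str]:
    """Try each model in order, retrying with exponential backoff on rate limits."""
    last_error = None
    for model in models:
        for attempt in range(retries_per_model + 1):
            try:
                return model, call_model(model, prompt)
            except RateLimited as err:
                last_error = err
                time.sleep(backoff_s * (2 ** attempt))
    raise RuntimeError("All models were rate-limited") from last_error
```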

Building for constraint teaches you to build systems that work across a range of conditions, not just optimal conditions. That is good engineering.

8. Becoming a Better Engineer Through Constraints

Step back and look at what the constraint teaches you:

- Clarity over cleverness: Vague prompts fail, so you learn to be explicit

- Structure over raw power: Complex tasks fail in one shot, so you learn to decompose

- Systems over interactions: Repeated manual prompting fails, so you build reusable context

- Iteration over perfection: Each attempt is cheap, so you experiment rather than overthink

- Robustness over optimization: You cannot rely on model power, so you build for multiple conditions

These are not compromises. These are the principles of good engineering. The constraint of working with a smaller model aligns incentives toward these principles.

I notice this in teams I work with. The engineers who use Haiku build better prompting practices. They build better documentation. They build better reusable context files. They experiment more and ship faster. The engineers who default to Opus often have sloppy context, loose prompts, and slow iteration.

The model choice is a proxy for engineering discipline.

9. How to Build Like This

If this resonates, here is how to start. The key is to build a foundation that makes Haiku reliable. That foundation is your context files.

Here is a template you can copy and adapt to your own work:

    # CONTEXT.md - Your Haiku-First Foundation

    ## Part 1: Specification (Required)
    What is the problem you are solving?

    Example: "Build a blog post about AI engineering practices that educates readers and converts them to consulting."

    ## Part 2: Constraints (Required)
    What are your limits?
    - Time budget: 2 hours
    - Token budget: 50K input tokens
    - Dependencies available: VOICE.md, AUTHORS.md, analytics data
    - What you cannot touch: existing published posts, homepage

    ## Part 3: Success Criteria (Required)
    How do you know it worked?
    - Measurable output: 3000+ words, 12-15 min read
    - Quality bar: Matches VOICE.md tone, includes 2+ real examples
    - Edge cases that matter: Must link to existing posts, must avoid hype

    ## Part 4: Feedback Loop (Required)
    How do you iterate?
    - What indicates failure: Output is generic, tone is off, no examples
    - What validates success: Read it aloud, does it sound like you?
    - When to escalate to Opus: If you need novel research synthesis or deep math

    ---

    ## Stage Decomposition

    Stage 1: Analysis (Haiku)
    - Input: Blog topic, target audience, success criteria
    - Task: Break down the topic into core arguments
    - Success: 5-7 main points with supporting detail
    - Output: Structured outline

    Stage 2: Context Preparation (Haiku)
    - Input: Outline, VOICE.md, related posts
    - Task: Gather relevant context, prior examples, citations needed
    - Success: All citations verified, related posts linked
    - Output: Reference document for stage 3

    Stage 3: Draft (Haiku)
    - Input: Outline, context, stage 1 analysis
    - Task: Write each section in order
    - Success: 3000+ words, all sections covered
    - Output: Raw draft

    Stage 4: Polish (Opus if needed)
    - Input: Draft, VOICE.md
    - Task: Refine unclear sections, strengthen arguments
    - Success: Reads like you, compelling, no filler
    - Output: Final version ready to publish

    ---

    ## Cost & Time Estimates
    Stage 1: $0.002, 2 min
    Stage 2: $0.001, 3 min
    Stage 3: $0.004, 5 min
    Stage 4: $0.02, 3 min (Opus only)

    Total: ~$0.03, ~13 min
    (One-shot Opus: $0.05, ~20 min + revision time)

Now that you have a template, here is how to use it:

- Start with Haiku. Make it your default. You will hit friction points. Good. That friction tells you where to invest in clarity.

- Build context files. VOICE.md, ARCHITECTURE.md, PATTERNS.md, CONTEXT.md - whatever your domain needs. These are the infrastructure that makes small models work.

- Decompose aggressively. Instead of one big prompt, break it into stages. Stage 1 is analysis. Stage 2 is planning. Stage 3 is execution. This works on Haiku and scales on Opus.

- Iterate without guilt. Cost is negligible. Explore. Try different angles. Fail fast. The iterations will compound.

- Use Opus surgically. When you hit a stage that genuinely needs deep reasoning or novel synthesis, use Opus for that stage only. Keep everything else on Haiku.

- Build reusable systems. Tools, MCP servers, memory files, prompt libraries. The constraint forces you to do this anyway. Lean into it.
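The template marks four parts as required, so a short check can refuse to start a Haiku run until they exist. This sketch assumes the `## Part N: Name (Required)` headings used in the template above. `missing_parts` is a hypothetical helper.

```python
import re

REQUIRED_PARTS = ("Specification", "Constraints", "Success Criteria", "Feedback Loop")

# Matches headings like "## Part 2: Constraints (Required)" and captures the part name.
HEADING = re.compile(r"^##\s+Part\s+\d+:\s+(.+?)\s+\(Required\)\s*$", re.MULTILINE)


def missing_parts(context_md: str) -> list[str]:
    """Return the required parts that have no heading in the document, in template order."""
    present = set(HEADING.findall(context_md))
    return [part for part in REQUIRED_PARTS if part not in present]
```

An empty result means the spec is at least structurally complete. Whether each part is actually clear is still something only the first Haiku run will tell you.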

10. Closing: The Constraint Is the Feature

I could end this with "Haiku is actually just as good as Opus," but that would be missing the point. Haiku is not as good. Opus is more capable. But capable is not the same as productive.

The constraint of working with a smaller model forces you to become a better engineer. You build clearer specifications. You structure context better. You decompose tasks. You iterate faster. You build systems instead of reaching for more power.

And then something interesting happens: your Haiku-first systems work. They work well. They work fast. And when they need to scale or face new problems, they scale because they were built on principles, not on raw capability.

The engineers I most respect are not the ones who work with the most powerful tools. They are the ones who build systems that work within constraints. That is the kind of engineer I want to be. And Haiku - the constraint it imposes - is how I get there.

Bigger models do not make you a better engineer. Smaller models do. The constraint is the feature.

Conclusion

The most successful AI workflows aren't built by adding AI on top of broken processes. They're built by rethinking the process itself through the lens of what AI makes possible.

Working through the challenges in this post? I help engineering leaders and CTOs navigate complex technical decisions and scale high-performing teams. Schedule a consultation →