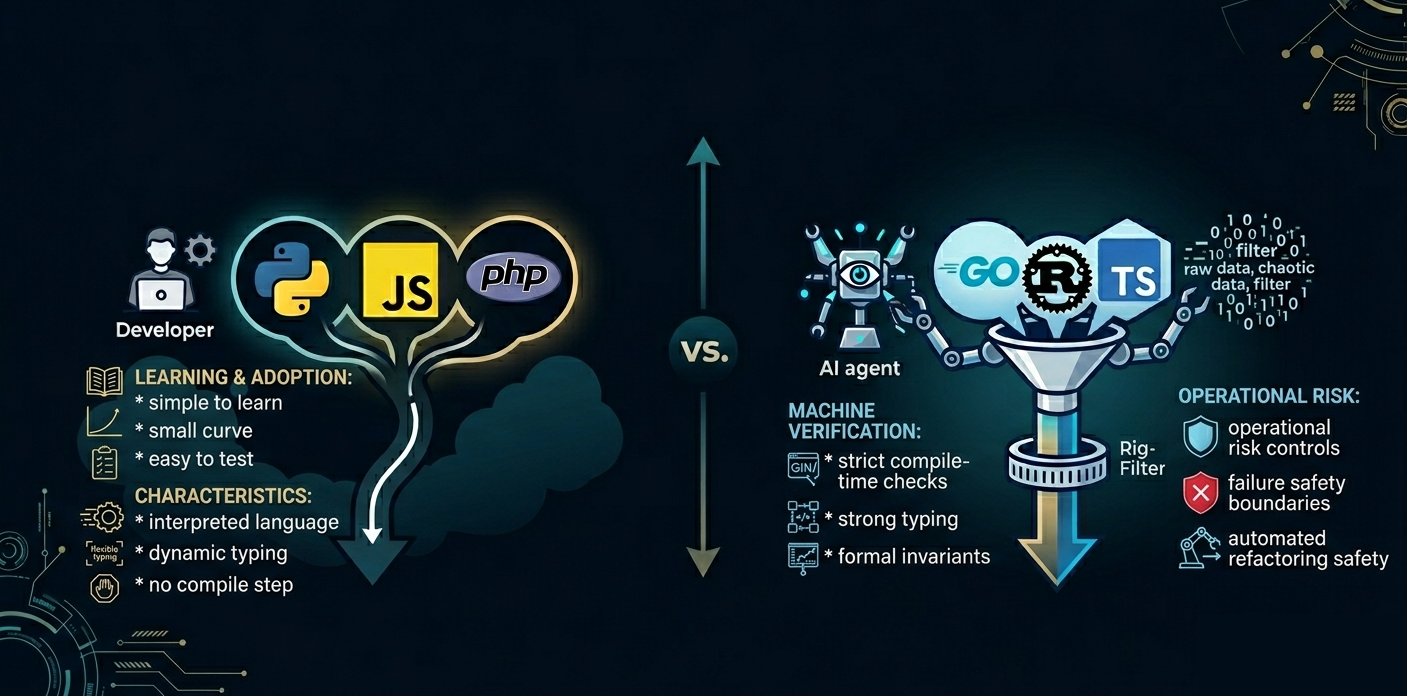

For nearly thirty years, mainstream programming language adoption was driven by one thing: developer convenience. AI changes that equation entirely.

The Historical Pattern: Humans Optimized for Convenience

For three decades, language choice was straightforward. Python succeeded because it reduced friction. JavaScript succeeded because it was unavoidable. PHP succeeded because deploying web apps became trivial. Ruby succeeded because Rails massively accelerated startup velocity.

In nearly every case, developer ergonomics outweighed theoretical correctness.

This made sense. Human developers are expensive. Reducing cognitive load matters. Iteration speed matters. Hiring pools matter.

The tradeoff was simple: sacrifice strict compile-time guarantees for rapid iteration, runtime flexibility, and minimal boilerplate. Correctness was achieved through testing, CI pipelines, code review, monitoring, and defensive engineering practices.

The language itself was often not the primary safety mechanism.

AI Changes the Optimization Function

AI-assisted development fundamentally alters this balance.

The reason is straightforward: AI systems can already generate enormous amounts of syntactically correct code extremely quickly.

Google reported in 2025 that 75% of newly written code internally is AI-generated and later reviewed by engineers. OpenAI stated that code generation has moved from roughly 20% to approximately 80% of developer work in only months. Industry analysis suggests we're approaching a threshold where most new code is machine-generated.

Once code generation becomes cheap, abundant, and partially autonomous, the engineering problem shifts.

Verification becomes more important than generation.

And this is exactly where statically typed, compiled languages shine.

Why Dynamic Languages Become Risky at Scale

Dynamic languages are incredibly effective when humans directly steer the system. Python exemplifies this: minimal boilerplate, runtime flexibility, fast experimentation, readability. Those are human-centric advantages.

But they come with tradeoffs: runtime type failures, hidden contracts, late error discovery, reduced refactor safety, and increased reliance on tests.

When a human writes the code, these costs are manageable because humans understand intent implicitly.

AI does not.

LLMs generate statistically plausible code. They do not possess semantic understanding of system architecture. That means subtle interface mismatches, incorrect assumptions, missing invariants, and hidden edge cases become far more dangerous at scale.

The Problem Demonstrated: Python

Consider a payment processing function. The requirements: process a payment and return the updated balance.

Here's a Python implementation:

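```python
def process_payment(user_id, payment):
    # db is the application's data-access layer (not shown)
    user = db.users.get(user_id)
    new_balance = user.balance - payment['amount']
    return {'status': 'success', 'balance': new_balance}
```

The code looks reasonable. It imports cleanly, and the test suite passes because no test exercises this path. You deploy to production.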

Then the first real customer payment reaches this function, and the request fails. Instead of an updated balance, the API returns a server error.

The bug is subtle: the code reads `user.balance`, but the `User` model's attribute is `account_balance`. Python raises an `AttributeError` only when that line executes, so if no test exercises this path, you don't find out until production.

Here's what the actual error looks like:

```
Traceback (most recent call last):
  File "/app/payments.py", line 52, in process_payment
    new_balance = user.balance - payment['amount']
AttributeError: 'User' object has no attribute 'balance'
```

This is the core problem with AI-generated Python at scale: errors hide until execution.

The Same Problem in Go

Now consider the same logic in Go:

```go
type User struct {
	ID             string
	AccountBalance float64
}

func processPayment(userID string, amount float64) (float64, error) {
	// db is the application's data-access layer (not shown).
	user := db.Users.Get(userID)
	newBalance := user.AccountBalance - amount
	return newBalance, nil
}
```

If the code accesses a field that doesn't exist, the build fails. Go reports the error at compile time, so nothing is deployed and nothing reaches production.

Here's what happens if you try to use the wrong field name:

```
./payments.go:52:23: user.Balance undefined (type User has no field or method Balance)
```

Go's static type system acts as an automated reviewer, validating these assumptions on every build, before the code ever runs.

TypeScript: The Bridge Language

But there's a third path. TypeScript:

```typescript
interface User {
  id: string;
  accountBalance: number;
}

async function processPayment(userId: string, amount: number): Promise<number> {
  // db is the application's data-access layer (not shown).
  const user: User = await db.users.get(userId);
  const newBalance = user.accountBalance - amount;
  return newBalance;
}
```

You define interfaces that describe your data shapes, specifying which fields are required and their types. When the payment processor accesses those fields, TypeScript's compiler verifies they exist and have the correct types. Incorrect field access is caught before deployment, and because TypeScript can be adopted one file at a time in an existing JavaScript codebase, teams get these checks without a rewrite.

Try to use the wrong field name in TypeScript:

```
src/payments.ts:52:30 - error TS2339: Property 'balance' does not exist on type 'User'.

52 | const newBalance = user.balance - amount;
   |                         ~~~~~~~
```

TypeScript's rise is not accidental. It succeeded because large JavaScript systems became difficult to reason about and organizations needed safer refactoring; AI tooling, by multiplying code volume, has only strengthened that case. TypeScript showed that teams will trade some raw velocity for incremental strictness.
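To make the comparison with the Python version concrete, here is a minimal sketch of the same function in TypeScript that keeps the Python signature (a payment object in, a status-and-balance object out). It assumes a hypothetical in-memory `users` map in place of `db`, and `typoDemo` is a hypothetical helper that exists only to hold the typo. The `// @ts-expect-error` directive documents the mistake from the Python example: `tsc` accepts that line only because it contains an error, and reports the directive as unused if `balance` ever becomes a valid property.

```typescript
// Mirrors the dict the Python version indexed with payment['amount'].
interface Payment {
  amount: number;
}

interface User {
  id: string;
  accountBalance: number;
}

// Mirrors the {'status': 'success', 'balance': ...} dict the Python version returned.
interface PaymentResult {
  status: "success";
  balance: number;
}

// Hypothetical in-memory store standing in for the article's db object.
const users = new Map<string, User>([
  ["u1", { id: "u1", accountBalance: 100 }],
]);

function processPayment(userId: string, payment: Payment): PaymentResult {
  // Map.get returns User | undefined, so strict null checks force this branch.
  const user = users.get(userId);
  if (user === undefined) {
    throw new Error(`unknown user: ${userId}`);
  }
  return { status: "success", balance: user.accountBalance - payment.amount };
}

// Hypothetical helper that exists only to hold the typo from the Python example.
function typoDemo(user: User): number {
  // @ts-expect-error Property 'balance' does not exist on type 'User'.
  return user.balance;
}
```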

Compilers as Supervisory Systems

In AI-assisted development, the compiler increasingly becomes a supervisory system—a continuous automated reviewer validating assumptions.

This changes the role of the language itself. Instead of being merely a tool for expression, languages increasingly become:

- Verification frameworks that enforce contracts

- Constraint systems that restrict invalid states

- Safety boundaries for autonomous agents
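The "constraint systems" idea can be sketched with a discriminated union: each payment outcome carries only the fields that make sense for it, and an exhaustive `switch` turns a forgotten case into a compile error. The outcome names, the `settle` and `describeOutcome` functions, and the integer-cents convention are assumptions for illustration, not part of the original example.

```typescript
// Each variant carries only its own fields, so a "declined payment with a
// new balance" cannot be constructed.
type PaymentOutcome =
  | { kind: "success"; balance: number }
  | { kind: "insufficient_funds"; shortfall: number }
  | { kind: "user_not_found"; userId: string };

// Hypothetical decision function; balances and amounts are integer cents.
function settle(userId: string, balance: number | undefined, amount: number): PaymentOutcome {
  if (balance === undefined) {
    return { kind: "user_not_found", userId };
  }
  if (amount > balance) {
    return { kind: "insufficient_funds", shortfall: amount - balance };
  }
  return { kind: "success", balance: balance - amount };
}

// Exhaustiveness check: if a variant is added to PaymentOutcome but not handled
// here, `outcome` is no longer `never` in the default branch and compilation fails.
function describeOutcome(outcome: PaymentOutcome): string {
  switch (outcome.kind) {
    case "success":
      return `ok, new balance ${outcome.balance}`;
    case "insufficient_funds":
      return `declined, short by ${outcome.shortfall}`;
    case "user_not_found":
      return `no such user: ${outcome.userId}`;
    default: {
      const unreachable: never = outcome;
      return unreachable;
    }
  }
}
```

The design choice is to push the rules into the type itself, so generated code that mixes up states fails at compile time instead of relying on a reviewer to notice.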

The 2025 Stack Overflow Developer Survey showed that Rust, a language built around a notoriously strict compiler, remained the most admired language among developers, with 72% of its users wanting to continue using it. At the same time, other languages emphasizing correctness and static typing (Gleam, Zig, Scala, TypeScript) drew strong admiration, several of them despite a small share of overall usage.

Research Validates the Risk

Academic evidence increasingly validates this concern. A 2025 large-scale study analyzing more than 500,000 code samples found AI-generated code exhibited more high-risk security vulnerabilities, increased use of unsafe patterns, and distinct maintainability risks.

Another 2025 security study found that LLMs frequently failed to adopt modern security practices and often produced outdated implementations.

Veracode research reported that roughly 45% of AI-generated code samples contained security flaws.

This doesn't mean AI-generated code is unusable. It means the industry increasingly needs automated correctness guarantees. And compile-time enforcement is one of the strongest mechanisms available.

The Emerging Architecture

The ideal future architecture for AI-generated software may look like this:

- LLMs generate implementation

- Type systems validate contracts

- Formal verification validates invariants

- CI pipelines validate integration

- Humans validate intent
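One caveat on "type systems validate contracts": TypeScript's checks stop at the program boundary. An annotation like `const user: User = await db.users.get(userId)` is trusted by the compiler but never checked at runtime, so data from a database, an API, or a model's JSON output still needs a runtime check. A minimal sketch of a hand-written type guard at that boundary, with `isUser` and `parseUser` as hypothetical helpers:

```typescript
interface User {
  id: string;
  accountBalance: number;
}

// Type guard: narrows `unknown` to `User` only after checking each field at runtime.
function isUser(value: unknown): value is User {
  if (typeof value !== "object" || value === null) return false;
  const v = value as { id?: unknown; accountBalance?: unknown };
  return typeof v.id === "string" && typeof v.accountBalance === "number";
}

// Parses untrusted JSON (for example, model output or an API response) into a
// User, failing loudly at the boundary instead of deep inside business logic.
function parseUser(json: string): User {
  const data: unknown = JSON.parse(json);
  if (!isUser(data)) {
    throw new TypeError(`payload does not match User: ${json}`);
  }
  return data;
}
```

A payload that uses `balance` instead of `accountBalance` is rejected here, at the edge, which is the runtime counterpart of the compile-time check shown earlier.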

In this model, the compiler becomes one of the primary defenses against AI hallucinations, such as references to fields or functions that don't exist. Those failures surface at build time, not in production.

Why Python Still Won't Disappear

This does not mean Python disappears. In fact, Python may grow even more dominant in AI orchestration, data science, rapid prototyping, and agent coordination. Python's ecosystem advantage is overwhelming.

But the split becomes clearer:

- AI orchestration & data science: Python

- High-assurance systems: Rust

- Enterprise backend: Go, Java, Kotlin

- Browser ecosystem: TypeScript

- Systems programming: Rust, C++

Python remains the "language of ideas." Stricter languages increasingly become the "language of guarantees."

The Cultural Shift

The deepest shift may not be technical. It may be cultural.

For decades, developer productivity meant: "How quickly can humans write software?"

The next decade may redefine it as: "How safely can humans supervise software written by machines?"

Under that model:

- Strictness becomes a feature, not a burden

- Compilers become strategic infrastructure

- Type systems become operational risk controls
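One concrete form of "type systems as risk controls" is the branded-type pattern: values that are plain strings and numbers at runtime but distinct types to the compiler, so a user ID cannot be passed where an amount belongs. A minimal sketch; `UserId`, `Cents`, `charge`, and `swappedArguments` are hypothetical, and the `__brand` property exists only at the type level.

```typescript
// Branded types: plain string/number at runtime, distinct types to the compiler.
type UserId = string & { readonly __brand: "UserId" };
type Cents = number & { readonly __brand: "Cents" };

// Constructors are the only place a raw value becomes branded, which makes
// them the natural spot to enforce invariants.
function userId(raw: string): UserId {
  if (raw.length === 0) throw new RangeError("empty user id");
  return raw as UserId;
}

function cents(raw: number): Cents {
  // Reject NaN, infinities, negatives, and fractional cents.
  if (!isFinite(raw) || raw < 0 || Math.floor(raw) !== raw) {
    throw new RangeError(`invalid amount: ${raw}`);
  }
  return raw as Cents;
}

// Deducts an amount from a user's balance and returns the new balance.
function charge(id: UserId, amount: Cents, balances: Map<UserId, Cents>): Cents {
  const balance = balances.get(id);
  if (balance === undefined) throw new Error(`unknown user: ${id}`);
  if (amount > balance) throw new RangeError("insufficient funds");
  return cents(balance - amount);
}

const alice = userId("u1");
const balances = new Map<UserId, Cents>([[alice, cents(10000)]]);

// Never called: it exists only to show that swapped arguments don't compile.
function swappedArguments(): Cents {
  // @ts-expect-error Argument of type 'Cents' is not assignable to parameter of type 'UserId'.
  return charge(cents(500), alice, balances);
}
```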

The industry may gradually shift from "make writing code easier" toward "make verifying generated code safer."

And if that happens, languages once considered "too strict" may become exactly what the AI era requires.
