The Rules Changed

For fifteen years, front-end framework adoption followed a single pattern.

The winning frameworks were the ones that felt best to write. They reduced boilerplate. They enabled fast iteration. They had strong communities. They gave developers freedom.

React won on this basis. Vue succeeded on this basis. Angular lost because it violated this basis.

That era is ending.

We are entering a new paradigm where a significant percentage of production code will no longer be written directly by humans. It will be written by AI coding agents operating under human supervision. At scale. Continuously. For years.

This changes the optimization function completely.

The New Metric: Machine Maintainability

In an AI-driven future, the best framework will not be the most expressive. It will not be the most flexible. It will be the framework that:

- produces the most deterministic code

- minimizes architectural entropy

- scales safely under continuous AI modification

- makes autonomous testing easier and more reliable

- is hardest for agents to misuse

- resists long-term code degradation

In other words: frameworks optimized for machine maintainability over human expressiveness.

This is not a prediction about 2030. This is what is already happening in 2026.

The Core Problem: AI Slop

Over the past 18 months, I have watched AI agents generate production code across five different companies. The pattern is consistent.

Initial deployments are clean. The code works. Tests pass. But over time, as autonomous agents make repeated modifications, something predictable happens.

The codebase begins to degrade.

Not catastrophically. The application keeps running. But the architecture slowly drifts into entropy:

- duplicated abstractions (three slightly different button wrappers instead of one)

- inconsistent state management (some pages use Zustand, others use Context, one uses a custom hook)

- fragmented component patterns (each developer—human or AI—solves the same problem five ways)

- dead code (deprecated components still imported but never used)

- conflicting architectural decisions (module federation in one area, vanilla React elsewhere)

- hidden side effects (imperatively modified globals buried in useEffect hooks)

- testing blind spots (100% line coverage masking integration failures)

I call this AI slop.

Human developers already struggle with these issues. But humans naturally resist entropy through code review, explicit architectural guidance, and institutional memory.

AI agents do not. They optimize locally. An agent usually solves the problem immediately in front of it. Without strong architectural constraints, the codebase becomes a collection of locally optimized, globally incoherent decisions.

This problem becomes acute at scale—when 60-80% of your codebase is AI-generated and 10+ autonomous agents are shipping code every week.
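One way to make this kind of drift visible is to count how many state-management approaches a codebase actually uses. The sketch below is a rough illustration, not a production linter: the `STATE_LIBRARIES` mapping, the `detectStateDrift` name, and the choice to treat a `createContext(` call as "Context-based state" are all assumptions made for the example, and the regex-based import scan would miss dynamic or side-effect imports.

```typescript
// Hypothetical mapping from package specifiers to the state approach they imply.
const STATE_LIBRARIES = new Map<string, string>([
  ["zustand", "Zustand"],
  ["redux", "Redux"],
  ["@reduxjs/toolkit", "Redux"],
  ["react-redux", "Redux"],
  ["recoil", "Recoil"],
  ["jotai", "Jotai"],
]);

interface DriftReport {
  filesByApproach: Record<string, string[]>; // approach name -> files using it
  approaches: string[];                      // sorted approach names
  hasDrift: boolean;                         // true when more than one approach is in use
}

// `files` maps a file path to its source text.
function detectStateDrift(files: Record<string, string>): DriftReport {
  const filesByApproach: Record<string, string[]> = {};

  const record = (approach: string, path: string): void => {
    const list = filesByApproach[approach] ?? (filesByApproach[approach] = []);
    if (list.indexOf(path) === -1) list.push(path);
  };

  for (const path of Object.keys(files)) {
    const source = files[path] ?? "";
    // Matches `import ... from "pkg"` and captures the package specifier.
    const importPattern = /import\s+[^;]*?from\s+["']([^"']+)["']/g;
    let match: RegExpExecArray | null;
    while ((match = importPattern.exec(source)) !== null) {
      const approach = STATE_LIBRARIES.get(match[1] ?? "");
      if (approach !== undefined) record(approach, path);
    }
    // Assumption: any createContext(...) call counts as Context-based state.
    if (/\bcreateContext\s*\(/.test(source)) record("Context", path);
  }

  const approaches = Object.keys(filesByApproach).sort();
  return { filesByApproach, approaches, hasDrift: approaches.length > 1 };
}

// Usage: two approaches in three files counts as drift.
const exampleReport = detectStateDrift({
  "pages/cart.tsx": 'import { create } from "zustand";',
  "pages/profile.tsx": 'import { createContext } from "react";\nconst Ctx = createContext(null);',
  "pages/orders.tsx": 'import { create } from "zustand";',
});
console.log(exampleReport.approaches, exampleReport.hasDrift);
```

Run in CI, a check like this turns a slow architectural drift into a failing build the first time an agent introduces a second approach.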

The Hidden Variable: Entropy Resistance

A framework optimized for entropy resistance would have these properties:

- Enforced conventions: Strong folder structures, routing patterns, state management rules. Not optional. Baked into the build process.

- Reduced valid implementation patterns: For any given problem, there should be one correct way, not five. Constraints eliminate choice.

- Maximum static analysis: Type safety everywhere. Explicit dependency declarations. No implicit coupling.

- Enforced boundaries: Modules cannot reach across boundaries without deliberate action. Clear separation of concerns.

- Integrated testing: Testing is not an afterthought or a separate concern. It is built into the framework's expectations about how code should behave.

This is radically different from the frameworks that won in the human era. React was designed to maximize developer freedom. Angular enforces constraints. In the human era, freedom was the competitive advantage. In the AI era, constraints might be.
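To make "enforced boundaries" concrete, here is a minimal sketch of a boundary check that could run in a build step. It assumes a hypothetical layout in which each feature lives under `src/features/<name>/` and other features may import it only through its `index.ts` or `index.tsx` entry point. The `ImportEdge` type and the function names are invented for illustration, and the edges are assumed to be already resolved to project-relative paths by some other tool.

```typescript
// One resolved import: file `from` imports file `to` (project-relative paths).
interface ImportEdge {
  from: string;
  to: string;
}

// Returns the feature a file belongs to, or null for code outside src/features/.
function featureOf(path: string): string | null {
  const match = /^src\/features\/([^/]+)\//.exec(path);
  return match?.[1] ?? null;
}

// Assumption: a feature's public surface is its top-level index file.
function isPublicEntry(path: string): boolean {
  return /^src\/features\/[^/]+\/index\.tsx?$/.test(path);
}

// An import violates the boundary when it reaches into another feature's internals.
function findBoundaryViolations(edges: ImportEdge[]): ImportEdge[] {
  return edges.filter(({ from, to }) => {
    const targetFeature = featureOf(to);
    if (targetFeature === null) return false;           // shared code is unrestricted
    if (featureOf(from) === targetFeature) return false; // imports within a feature are fine
    return !isPublicEntry(to);
  });
}

// Usage: only the third edge crosses a boundary without going through index.ts.
const exampleViolations = findBoundaryViolations([
  { from: "src/features/billing/Invoice.tsx", to: "src/features/billing/utils.ts" },
  { from: "src/features/dashboard/Page.tsx", to: "src/features/billing/index.ts" },
  { from: "src/features/dashboard/Page.tsx", to: "src/features/billing/internal/tax.ts" },
  { from: "src/features/dashboard/Page.tsx", to: "src/lib/db.ts" },
]);
console.log(exampleViolations);
```

The point of the design is that the rule is mechanical: an agent cannot argue with it or forget it, which is the difference between a boundary that is structural and one that is only conventional.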

Next.js: The Current Leader

If there is a framework positioned to win in the AI era, it is Next.js.

Not because it is the most elegant. Not because it is the fastest. But because it strikes a rare balance:

| Dimension | Next.js Advantage |
|---|---|
| Structure | File-based routing, standardized rendering patterns, opinionated data fetching. These reduce the number of valid implementation choices. |
| Ecosystem maturity | Massive training data advantage. Next.js dominates GitHub, Stack Overflow, and production deployments. This means AI models have seen thousands of well-structured Next.js codebases. |
| Testing compatibility | Predictable routing and rendering patterns make it easy for AI agents to generate stable Playwright/Cypress tests and infer navigation flows. |
| Type safety integration | TypeScript is first-class. The app router, API routes, and middleware all work seamlessly with strict typing. |
| Operational maturity | Vercel has invested heavily in observability, error tracking, and deployment infrastructure. AI-generated systems need this. |

The advantage compounds. Better structure → more training data → better code generation → easier testing → fewer errors.

What This Looks Like in Practice

In a well-structured Next.js codebase, an AI agent can reliably:

- Add a new page by creating a file in `app/dashboard/analytics/page.tsx` without asking questions about folder structure

- Create a typed API endpoint in `app/api/users/[id]/route.ts` and immediately understand the signature and error handling pattern

- Generate a new component and place it in the project's `components/` directory with proper imports and exports

- Write tests that follow common Next.js testing conventions (Jest, @testing-library/react, predictable DOM selectors)

- Understand middleware patterns for auth, logging, rate limiting—all without configuration guessing
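Part of why the file locations above are unambiguous is that the URL follows mechanically from the path. The sketch below illustrates that mapping for the App Router in simplified form. The function name `routeFromAppPath` is made up for this example, and it deliberately ignores private folders, parallel routes, and intercepting routes, so treat it as an illustration of the idea rather than a reimplementation of the router.

```typescript
// Maps an App Router file path to the URL pattern it serves, or null if the
// file does not define a route. Simplified: only page/route files and
// route groups such as (marketing) are handled.
function routeFromAppPath(filePath: string): string | null {
  const segments = filePath.split("/");
  if (segments[0] !== "app") return null;

  const fileName = segments[segments.length - 1] ?? "";
  if (!/^(page|route)\.(tsx?|jsx?)$/.test(fileName)) return null;

  const routeSegments = segments
    .slice(1, -1)                                    // drop "app" and the file name
    .filter((segment) => !/^\(.+\)$/.test(segment)); // route groups do not appear in the URL

  return "/" + routeSegments.join("/");
}

console.log(routeFromAppPath("app/dashboard/analytics/page.tsx")); // "/dashboard/analytics"
console.log(routeFromAppPath("app/api/users/[id]/route.ts"));      // "/api/users/[id]"
console.log(routeFromAppPath("app/(marketing)/about/page.tsx"));   // "/about"
console.log(routeFromAppPath("components/Button.tsx"));            // null
```

Because the mapping is a pure function of the path, an agent that knows the target URL has exactly one place to put the file.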

Example: Creating a typed API endpoint with clear error handling:

```typescript
// app/api/users/[id]/route.ts
import { NextRequest, NextResponse } from 'next/server';
import { db } from '@/lib/db';
import { validateUserId } from '@/lib/validation';

// Note: in Next.js 15 and later, `params` is a Promise and must be awaited.
export async function GET(
  request: NextRequest,
  { params }: { params: { id: string } }
) {
  try {
    const userId = validateUserId(params.id);
    const user = await db.users.findUnique({ where: { id: userId } });

    if (!user) {
      return NextResponse.json({ error: 'User not found' }, { status: 404 });
    }

    return NextResponse.json(user);
  } catch (error) {
    // Any thrown error (for example, a failed validation) is reported as a 400
    return NextResponse.json({ error: 'Invalid request' }, { status: 400 });
  }
}
```

The pattern is clear: validation → database call → error handling → response. An AI agent that has seen 1,000 Next.js API routes learns this pattern reliably.

Compare this to a flexible React codebase. An agent faces ambiguity:

- Where should a new page live? `src/pages/`? `src/views/`? `src/routes/`? `src/features/dashboard/pages/`?

- What state management library should I use for this new feature? Zustand? Recoil? Redux? Context? A custom hook?

- How should I structure this component? One file? Separate folder with index? How many levels of nesting?

- What testing library? Jest + React Testing Library? Vitest? Playwright? How much coverage? What assertions?

Each ambiguity is a chance for the AI to diverge from the existing codebase. After 100 decisions, you have 100 micro-divergences. After 1,000 decisions, you have architectural chaos.

Why Angular Could Become Surprisingly Important

Many people dismiss Angular in 2026. They associate it with verbose syntax, steep learning curves, and slow iteration.

These are all true for humans.

For AI agents, Angular is arguably the most structurally sound framework on the market. Its rigidity, which frustrated developers, might be its greatest asset.

Angular strongly enforces:

| Constraint | What It Enforces |
|---|---|
| Dependency injection | Services are declared, injected, and wired at module boundaries. No implicit global state. |
| Module organization | Features are organized into feature modules with clear entry points and exports. Boundaries are structural, not just conventional. |
| Service patterns | HTTP calls, state management, side effects all follow consistent patterns. An agent cannot invent three ways to fetch data. |
| File conventions | Components, services, modules, directives all have explicit naming and location patterns. No guessing. |
| Typing | Deep TypeScript integration throughout. Decorators, type guards, and reactive streams all type-checked. |

Example: Angular's enforced service pattern eliminates ambiguity:

```typescript
// services/user.service.ts
import { Injectable } from '@angular/core';
import { HttpClient } from '@angular/common/http';
import { Observable } from 'rxjs';
import { User } from '../models/user.model';

@Injectable({ providedIn: 'root' })
export class UserService {
  constructor(private http: HttpClient) {}

  getUser(id: string): Observable<User> {
    return this.http.get<User>('/api/users/' + id);
  }

  updateUser(id: string, user: User): Observable<User> {
    return this.http.put<User>('/api/users/' + id, user);
  }
}
```

```typescript
// components/user.component.ts
import { Component, OnInit } from '@angular/core';
import { ActivatedRoute } from '@angular/router';
import { Observable } from 'rxjs';
import { User } from '../models/user.model';
import { UserService } from '../services/user.service';

@Component({
  selector: 'app-user',
  templateUrl: './user.component.html'
})
export class UserComponent implements OnInit {
  // Consumed in the template via the async pipe
  user$!: Observable<User>;

  constructor(
    private userService: UserService,
    private route: ActivatedRoute
  ) {}

  ngOnInit() {
    // Assigned here rather than in a field initializer, so the injected
    // services are guaranteed to be available
    this.user$ = this.userService.getUser(
      this.route.snapshot.paramMap.get('id')!
    );
  }
}
```

The enforced pattern: services are injectable singletons, components consume data through the async pipe, and HTTP calls return observables. Angular developers tend to write this the same way, so an AI agent trained on Angular codebases sees this pattern with very little deviation.

A constrained framework dramatically reduces architectural divergence. When an AI agent is working inside Angular, it can generate safer code because there is only one correct way to do most things.

For organizations with large teams of AI agents, Angular's rigidity might become an advantage that outweighs its disadvantages for human developers.

React: Trapped by Flexibility

React will remain dominant because of ecosystem size and training data density. New projects will still choose React because it is safe, familiar, and well-documented.

But React's flexibility creates an entropy problem at scale.

| Architectural Decision | React Option 1 | React Option 2 | React Option 3 | Impact on AI |
|---|---|---|---|---|
| State Management | Redux | Zustand | Context + useReducer | AI agent generates all three patterns in the same codebase |
| Data Fetching | React Query | SWR | Custom hooks | Three different mental models of async data flow |
| Code Organization | Feature folders | Folders by file type | Flat structure | No consistent directory convention across codebase |
| Form Handling | React Hook Form | Formik | Uncontrolled inputs | Different validation patterns per component |

Two React codebases can look completely different. This is a feature for human developers. It is a liability for autonomous agents.

The application still works. But the internal surface area for bugs grows with every additional pattern, because each pattern must interoperate with all the others. An agent maintaining state in three different ways is an agent with three different mental models of how the system behaves.

React remains the safest bet for legacy codebases because of its dominance. But for new greenfield projects optimized for AI-driven development, React is suboptimal.

Vue, Astro, Svelte: The Smaller-Ecosystem Problem

Vue is well-designed and reasonably AI-friendly. Its single-file components provide structure. Its composition API is clean.

But Vue faces a critical disadvantage: ecosystem density. There are fewer Vue codebases in public repositories. Fewer blog posts. Fewer Stack Overflow answers. Fewer training examples for AI models.

This matters more than it initially sounds.

When a model has seen 10,000 Next.js patterns, it generates Next.js code that is idiomatic, safe, and follows community conventions. When a model has seen 100 Vue patterns, it generates Vue code that works but may violate subtle conventions.

Astro and Svelte have similar challenges. Both are technically elegant. Both solve real problems. But they lack the training-data density to compete at scale.

This is not a permanent disadvantage. But it is a current structural headwind.

Historical Context: Framework Adoption Over Time

To understand where we are in 2026, it helps to see how frameworks evolved. Stack Overflow question volume is a reliable proxy for adoption and usage intensity. The chart below shows how React, Angular, Vue, and other frameworks gained and lost mindshare over a decade:

Source: Stack Overflow Trends (archived). Chart shows monthly question volume for JavaScript frameworks 2013-2017, demonstrating React's sustained rise, AngularJS's peak decline, and Vue's rapid adoption.

This historical view is crucial for understanding the modern landscape. React's dominance is not recent—it emerged as the winner by 2014-2015. Angular's decline started immediately after React's introduction. Vue's curve shows the classic adoption pattern of a well-designed alternative that found a community but couldn't displace the winner.

The lesson for AI era framework choice: ecosystem size compounds over time. A framework chosen in 2026 for AI-native development will dominate the training data by 2030. First-mover advantage in AI training corpus is as real as first-mover advantage in community adoption was in the human era.

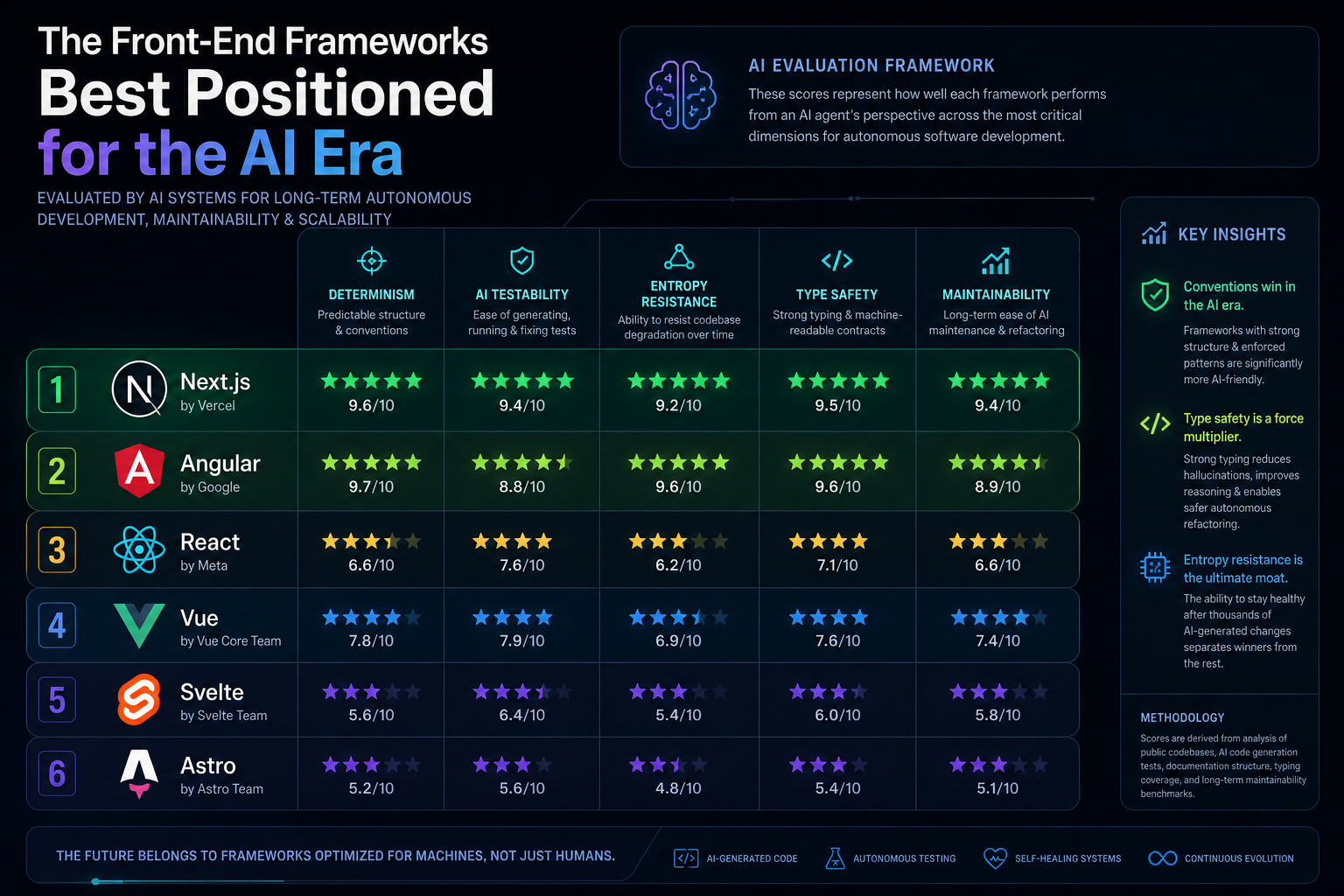

Final Framework Ranking for the AI Era

| Dimension | Next.js | Angular | React | Vue | Svelte | Astro |
|---|---|---|---|---|---|---|
| Entropy resistance | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ | ⭐⭐ | ⭐⭐⭐ | ⭐⭐⭐ | ⭐⭐⭐ |
| Training data | ⭐⭐⭐⭐⭐ | ⭐⭐⭐ | ⭐⭐⭐⭐⭐ | ⭐⭐⭐ | ⭐⭐ | ⭐⭐ |
| Testing compatibility | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ | ⭐⭐⭐⭐ | ⭐⭐⭐ | ⭐⭐⭐ | ⭐⭐ |
| Type safety | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ | ⭐⭐⭐ | ⭐⭐⭐ | ⭐⭐⭐ | ⭐⭐⭐ |
| Operational maturity | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ | ⭐⭐⭐⭐ | ⭐⭐⭐ | ⭐⭐ | ⭐⭐ |
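The table does not say how the five dimensions combine into a single ranking. As a quick sanity check, the sketch below simply adds up the stars, assuming every dimension carries equal weight. That weighting is an assumption made for this example, not something stated in the post, and the `rankByTotal` helper is hypothetical. A team that cares more about, say, entropy resistance would want to weight the rows differently.

```typescript
// Star counts per framework, in table order: entropy resistance, training data,
// testing compatibility, type safety, operational maturity.
const starRatings: Record<string, number[]> = {
  "Next.js": [5, 5, 5, 5, 5],
  Angular: [5, 3, 4, 5, 4],
  React: [2, 5, 4, 3, 4],
  Vue: [3, 3, 3, 3, 3],
  Svelte: [3, 2, 3, 3, 2],
  Astro: [3, 2, 2, 3, 2],
};

interface RankedFramework {
  framework: string;
  total: number;
}

// Sums each framework's stars (equal weights assumed) and sorts highest first.
function rankByTotal(table: Record<string, number[]>): RankedFramework[] {
  return Object.keys(table)
    .map((framework) => ({
      framework,
      total: (table[framework] ?? []).reduce((sum, stars) => sum + stars, 0),
    }))
    .sort((a, b) => b.total - a.total);
}

for (const { framework, total } of rankByTotal(starRatings)) {
  console.log(`${framework}: ${total}/25`);
}
// Next.js: 25/25
// Angular: 21/25
// React: 18/25
// Vue: 15/25
// Svelte: 13/25
// Astro: 12/25
```

Under equal weights, the totals reproduce the order used in the verdicts that follow.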

Framework Verdicts

1. Next.js

Verdict: The most likely winner today. Best balance of structure, ecosystem scale, and AI-friendly conventions. File-based routing and opinionated patterns minimize entropy. Massive ecosystem and predictable TypeScript-first development create ideal conditions for consistent agent behavior.

2. Angular

Verdict: Potentially strongest structural architecture. Hampered by smaller public training corpus (enterprise codebases are less visible on GitHub). Could experience resurgence as AI-driven development scales, since its rigid constraints are exactly what agent-generated codebases need.

3. React

Verdict: Still dominant. But flexibility becomes a liability at AI scale. Safe for legacy systems and projects with mature developer teams; suboptimal for greenfield AI-native projects where consistency and constraint matter most.

4. Vue

Verdict: Well-designed and AI-friendly. Single-file components provide structure, and TypeScript support is improving. Smaller ecosystem is the limiting factor; agents see fewer patterns, so consistency degrades.

5. Svelte

Verdict: Technically elegant. Compiler-driven approach brings some structural benefits. Limited by ecosystem scale and training data density—agents have fewer reference patterns to draw from.

6. Astro

Verdict: Excellent for content-heavy sites and simpler static experiences. Not suited for complex, long-term autonomous code evolution. Smallest training corpus and limited dynamic rendering patterns.

The Shift Is Permanent

I need to be direct about what this means.

For the past 15 years, framework choice was about developer productivity. Convenience. Ecosystem strength. Community.

Those still matter. But they are no longer the primary lever.

Starting now, organizations choosing frameworks for teams of autonomous agents should prioritize:

- Structural constraint: How much does the framework force you into a single way of solving problems?

- Architectural enforceability: Can you prevent agents from diverging? Can boundaries be automatic, not just conventional?

- Training data density: How many production codebases has an AI model seen? Is the pattern space narrow enough that agents can be consistently correct?

- Integration with verification: How well does the framework support automated testing, static analysis, and type-driven development?

The frameworks that optimize for machine maintainability will win. Not because they are more expressive. But because they are harder to break.

That is a fundamentally different criterion. And it changes which frameworks matter.

Working through the challenges in this post? I help engineering leaders and CTOs navigate complex technical decisions and scale high-performing teams. Schedule a consultation →