The moment a product manager asks for global search, teams reach for Elasticsearch. Most of them don't actually need it.

The Mistake

One of the most common mistakes engineering teams make when building search is reaching for Elasticsearch far too early.

The moment a product manager says "we need global search," the architecture diagrams start expanding: Elasticsearch clusters, sync workers, indexing pipelines, queue systems, retry logic, infrastructure monitoring, mapping management, reindexing jobs.

And suddenly, a relatively straightforward feature has turned into an entirely separate platform.

The reality is that for a huge number of SaaS applications, PostgreSQL already gives you everything you need to build a fast, relevant, production-grade global search experience.

If your application already uses Postgres — and most do — you can often ship global search in days instead of months, without introducing another distributed system into your stack.

What PostgreSQL Actually Gives You

PostgreSQL includes built-in full-text search capabilities that are surprisingly powerful:

- Relevance ranking with weighted fields

- Phrase search and boolean operators

- Highlight snippets for context

- Fast indexed lookup via GIN indexes

- Language-aware stemming and query parsing

- Modern search syntax (phrases, OR operators, negation)

And critically: it runs directly inside the database you already operate. No synchronization layer. No duplicate infrastructure. No eventual consistency issues between your app database and your search engine.

That simplicity matters more than most teams realize.

Step 1: Building Searchable Tables

The core idea is simple: create a searchable representation of each row using a tsvector.

For example, a hotel table might include name, city, description, and amenities. But not all fields are equally important for search. If someone searches "Hilton Paris," the hotel name should matter far more than whether "Paris" appears in the amenities.

PostgreSQL solves this using weighted search fields:

ALTER TABLE hotels

ADD COLUMN fts_vector tsvector

GENERATED ALWAYS AS (

setweight(to_tsvector('english', coalesce(name, '')), 'A') ||

setweight(to_tsvector('english', coalesce(city, '')), 'A') ||

setweight(to_tsvector('english', coalesce(description, '')), 'B') ||

setweight(to_tsvector('english', coalesce(amenities, '')), 'C')

) STORED;Then add a GIN index for speed:

CREATE INDEX ix_hotels_fts

ON hotels

USING gin(fts_vector);Now your table supports fast ranked search directly inside PostgreSQL. No external system required.

Why Weighted Fields Matter

Not all text has equal meaning. Weight A is for primary fields (hotel name, airline name). Weight B for secondary (description, headlines). Weight C for supplementary (metadata, tags). Weight D exists for even lower-priority fields.

The beauty of weighted search is that it handles the ranking problem at the database layer, not the application layer. When someone searches "Paris," they almost always want hotels with "Paris" in the name — not hotels that happen to mention Paris in a guest review or amenity description.

That ranking logic dramatically improves result quality. Without it, search quickly feels noisy and irrelevant. You end up with hundreds of matches for a common term, most of them garbage. Weights force the database to surface the most semantically relevant results first.

PostgreSQL's ranking system scales this elegantly. You're not adding machine learning models or training ranking algorithms. You're just saying: name matches matter 4x more than description matches. That simple decision handles 95% of real-world search use cases.

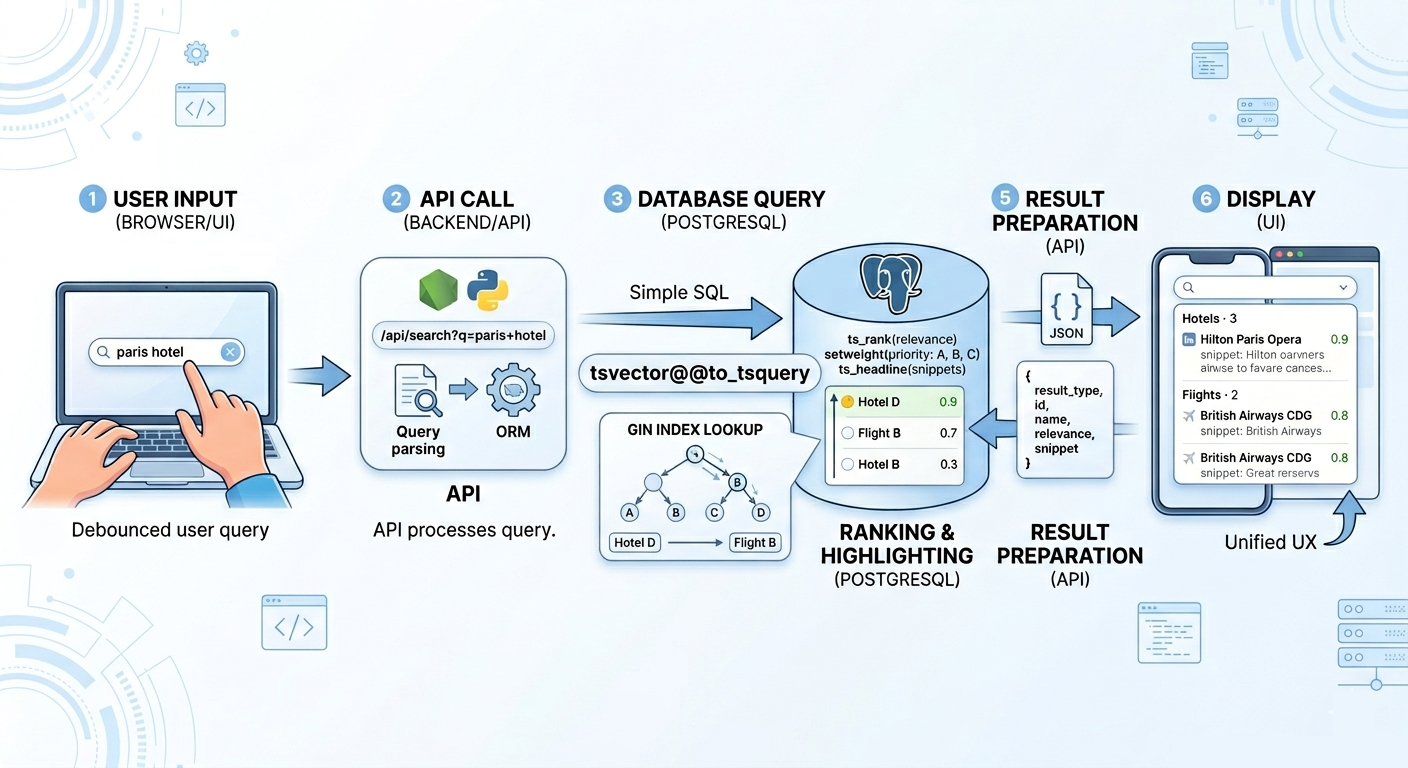

Step 2: The Search Endpoint

Once each table is searchable, the backend becomes straightforward. Your API endpoint accepts a query and searches multiple tables:

SELECT

'hotel' as result_type,

id,

name,

city,

ts_rank(fts_vector, query) as relevance,

ts_headline('english', description, query,

'StartSel=<mark>, StopSel=</mark>') as snippet,

query

FROM hotels,

websearch_to_tsquery('english', 'paris hotel') as query

WHERE fts_vector @@ query

ORDER BY relevance DESC

LIMIT 10;The key function here is ts_rank(), which returns a numeric score between 0 and 1 indicating how relevant a document is to the query. Documents where the search term appears in weighted A fields will rank much higher than those where it appears in C fields. PostgreSQL calculates this automatically.

Do the same for flights, packages, articles, then combine with UNION ALL:

(SELECT ... FROM hotels)

UNION ALL

(SELECT ... FROM flights)

UNION ALL

(SELECT ... FROM packages)

UNION ALL

(SELECT ... FROM support_articles)

ORDER BY relevance DESC

LIMIT 20;The important thing: every result shares a common structure (result_type, id, name, relevance, snippet). That allows the frontend to render a unified search experience regardless of source. The frontend doesn't need to know about ranking logic — it just displays results in the order they arrive.

In a real implementation, you'd likely wrap this in a stored procedure or parameterized query in your ORM. The complexity of the SQL is manageable because the hard work (tokenization, stemming, ranking) is delegated to the database.

PostgreSQL Handles Modern Syntax

The function websearch_to_tsquery() gives users Google-style search syntax out of the box.

Users can type:

"new york" hotel— phrase search (matches "new york" as a contiguous phrase)paris OR rome— boolean OR (matches hotels in either city)hotel -hostel— negation (matches hotels, but excludes hostels)luxury hotel near center— implicit AND (all words must appear)

PostgreSQL handles the parsing correctly. You're already winning on complexity. Most users expect this syntax and feel natural typing it. They don't expect a special query DSL or complex advanced search form.

The function intelligently handles edge cases: extra whitespace, leading/trailing operators, malformed queries. It degrades gracefully when users enter unexpected input. Your search box won't break on user error.

Step 3: Snippets and Highlights

One feature users expect is highlighted matches. PostgreSQL has this built in via ts_headline():

Output:

Luxury <mark>hotel</mark> near central <mark>Paris</mark>That tiny detail makes search feel dramatically more polished. And because the database generates the snippet, you avoid complicated frontend highlighting logic.

Step 4: Live Search UX

The best implementations are simple:

- User types

- Wait 300ms (debounce)

- Call

/api/search?q=... - Show grouped results

- Navigate on click

No dedicated page. No modal. No complexity. Just a fast search box.

Example grouping:

Hotels · 3

─────────────────────────────

Hilton Paris Opera

Marriott Canary Wharf

Premier Inn South Kensington

Flights · 2

─────────────────────────────

British Airways LHR → CDG

EasyJet STN → ORYPerformance Reality

With proper GIN indexes, full-text search performance is excellent:

- 10k rows: less than 10ms

- 100k rows: 20-30ms

- 1M rows: 50-100ms

For the majority of SaaS applications, that's completely acceptable. Users don't perceive latency below 100ms; it feels instant. And importantly: you achieve this without introducing another operational dependency.

A GIN index on fts_vector in a 1M-row table typically adds less than 10% to storage size. Storage is cheap. The performance gain more than offsets it. GIN indexes are also fast to maintain — PostgreSQL updates them incrementally as rows change, not in batch reindex jobs.

The real constraint hits much later. At 10M+ rows, search latency may start approaching 200-300ms, and you may need to consider sharding or eventual consistency. But that's a fantastic problem to have — it means you've grown your business significantly. Most teams never reach that scale for search.

And when you do, you have options: read replicas for search, dedicated read-only Postgres instances, or only then considering Elasticsearch. You don't have to make that bet upfront.

The Hidden Cost of Elasticsearch

Elasticsearch is powerful. But it also introduces a significant amount of complexity:

- Data synchronization — your Postgres data must stay in sync with your Elasticsearch index. This requires a sync layer: events, message queues, or polling.

- Reindexing workflows — when you change schema (add fields, change weights, update analyzers), you need jobs that rebuild indexes without downtime. This is the ops nightmare nobody talks about.

- Operational maintenance — monitoring, scaling, backups, upgrades. Elasticsearch is another database to operate, patch, and care for.

- Mapping migrations — changing how fields are indexed requires careful planning and often downtime if you get it wrong.

- Cluster scaling — coordinating nodes and shards becomes complex. You need to understand shard allocation, replica placement, and recovery workflows.

- Eventual consistency bugs — your search results may lag behind your source of truth. A user creates an object, gets a redirect, and can't find it in search. Suddenly you're debugging timing issues.

- Failure recovery — when Elasticsearch fails, you need fallback behavior. Do you fall back to database search? Do you return no results? How do you coordinate this with monitoring and alerting?

None of that complexity appears in the original product requirement. Teams inherit it accidentally. And early-stage products often pay that cost long before they receive meaningful value from it.

The trap is insidious because Elasticsearch works great once it's operating. The pain is all upfront: architecture, plumbing, operational learning curve. By the time you're live, you've already spent 2-3 months that could have been spent shipping features.

When PostgreSQL Wins

PostgreSQL full-text search is the right choice when:

- Your data already lives in Postgres — you get search for free, no sync layer required

- You need global search across business entities — hotels, flights, packages, articles, whatever. Postgres handles heterogeneous queries elegantly with UNION ALL.

- Your datasets are moderate in size — under a few million rows. Scale matters, but modern Postgres handles large datasets surprisingly well.

- You want fast implementation — days or weeks, not months. PostgreSQL's built-in tools are mature and well-documented.

- You want minimal infrastructure — one database instead of two. Your team deploys to one place, monitors one system, owns one operational domain.

- You care about operational simplicity — no cluster coordination, no shard management, no sync workers to debug at 3am.

- Your search patterns are relatively standard — keywords, phrases, boolean operators. If you need semantic search or vector similarity, Postgres can handle that too (pgvector), but that's a different problem.

That describes a surprisingly large percentage of modern SaaS products. A conservative estimate: 80% of teams reaching for Elasticsearch would be better served by PostgreSQL.

When Elasticsearch Actually Makes Sense

There absolutely are cases where Elasticsearch becomes the right tool. Usually when you need:

- Advanced typo tolerance — fuzzy matching, phonetic similarity

- Semantic/vector search — finding conceptually related results, not just keywords

- Massive-scale indexing — 100M+ documents where Postgres FTS becomes a bottleneck

- Complex aggregations — faceted search, nested filtering

- Multi-region search infrastructure — serving global users with local clusters

- Dedicated relevance engineering — A/B testing ranking algorithms

But most teams are nowhere near that stage when they first implement search. And designing for hypothetical scale too early is one of the fastest ways to slow product velocity.

The Bigger Lesson

The interesting part here isn't really about PostgreSQL. It's about resisting architectural overengineering.

A lot of engineering complexity comes from solving future problems that may never actually arrive. Global search sounds like a "big infrastructure problem." But for many applications, it's really just:

- Good indexing — tell the database which fields to search and how to tokenize them

- Good ranking — weight fields so that primary matches rank above secondary

- Good UX — show results instantly, highlight matches, render snippets with context

PostgreSQL already gives you the hard parts. The rest is product design.

The principle applies everywhere in architecture. Teams often assume they need sophisticated tooling because the problem sounds sophisticated. But most businesses operate at ordinary scale with ordinary constraints. Your search engine doesn't need to be a platform. Your metrics database doesn't need a time-series specialist. Your cache doesn't need a distributed store.

PostgreSQL, Redis, and a good primary database solve 95% of real-world infrastructure problems. The remaining 5% are genuinely difficult and warrant architectural complexity. But they're rare.

In practice, the simplest architecture is often the one that survives the longest. Build the feature your users need today. If it outgrows Postgres—great, you have a good problem to solve. But don't solve it in advance. You'll be right much more often if you resist the temptation to architect for scale you don't yet have.

Working through the challenges in this post? I help engineering leaders and CTOs navigate complex technical decisions and scale high-performing teams. Schedule a consultation →